Data Center Cooling Group Control Functions

SEPTEMBER 8, 2025

by Chris Soh, Product Manager, IT Cooling Systems

In data centers, where multiple cooling units operate simultaneously, effective coordination is essential to maintain thermal stability and energy efficiency.

Traditionally, this coordination, or “group control,” relied on centralized building management systems (BMS) and custom programming—adding complexity and cost.

Advanced cooling systems offer a simpler approach: peer-to-peer communication built directly into the equipment.

Instead of a central controller, individual units communicate over a shielded twisted-pair bus, working together as a unified system.

This communication lets the grouped units share data, adjust performance, and respond dynamically to changing conditions without relying on external controls.

This capability is now highly sought after in precision cooling units. Features like shared setpoints, standby unit rotation, and average temperature control are managed internally, eliminating the need for third-party integration.

How Peer-to-Peer Group Control Works

Typically, each cooling unit includes a built-in controller that networks over a shielded twisted-pair link. When daisy-chained on this bus, the units form a peer-to-peer control network that enables:

- Peer-to-peer communication: Units exchange data directly without relying on a central hub.

- Distributed control logic: Decision-making is shared across units, allowing faster and more flexible responses.

- Real-time data sharing: Units constantly update one another with current operating status and environmental conditions.

- Automatic redundancy and load rotation: Standby units are rotated and activated as needed to balance workload and ensure continuous operation.

Together, these features transform individual units into a single, intelligent cooling network—improving operational efficiency, system resilience, and control precision without relying on external supervisory systems.

Key Features of Group Control Systems

Group control systems improve operational coordination with features like:

- Automatic Master Unit Assignment: If the designated master unit goes offline due to an alarm, shutdown, or failure, another unit automatically takes over to maintain uninterrupted operation.

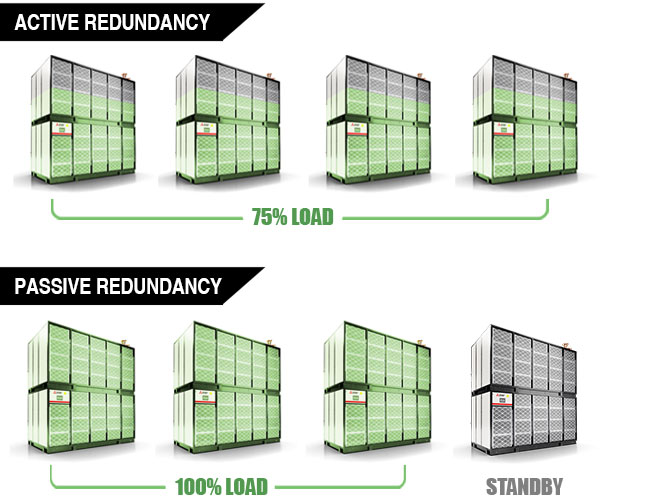

- Standby Rotation & Activation: Standby units cycle periodically to balance operating hours. When an active unit becomes unavailable or exceeds temperature limits, a standby unit automatically activates. This is essential for balanced load sharing among data center cooling units.

- Average Temperature Control: Regulates cooling output based on the average return air temperature across all active units, rather than allowing a single outlier sensor to dictate system behavior. This prevents overcooling caused by inaccurate or localized readings, keeps conditions balanced across the space, and improves part-load efficiency by aligning output more closely with actual demand.
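The three group functions above can be sketched in a few lines of Python. This is a minimal illustration, not MEWALL's firmware: the `Unit` fields, the rule that the lowest-address available unit becomes master, and putting the available units with the fewest run hours on duty are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Unit:
    address: int          # hypothetical bus address, unique per unit
    available: bool       # False when in alarm, shut down, or failed
    run_hours: float      # accumulated operating hours
    return_air_c: float   # return air temperature, °C
    active: bool = False  # True while cooling, False while on standby


def elect_master(units: list[Unit]) -> Optional[Unit]:
    """Assumed priority rule: the lowest-address available unit is master."""
    candidates = [u for u in units if u.available]
    return min(candidates, key=lambda u: u.address) if candidates else None


def rotate_duty(units: list[Unit], duty_count: int) -> None:
    """Put the available units with the fewest run hours on duty; others stand by."""
    ranked = sorted((u for u in units if u.available),
                    key=lambda u: (u.run_hours, u.address))
    on_duty = {u.address for u in ranked[:duty_count]}
    for u in units:
        u.active = u.address in on_duty


def average_return_air(units: list[Unit]) -> float:
    """Average return air temperature over active units only."""
    readings = [u.return_air_c for u in units if u.active]
    if not readings:
        raise ValueError("no active units")
    return sum(readings) / len(readings)


# Hypothetical four-unit group; unit 1 is in alarm.
units = [
    Unit(1, available=False, run_hours=900, return_air_c=27.0),
    Unit(2, available=True, run_hours=1200, return_air_c=26.0),
    Unit(3, available=True, run_hours=800, return_air_c=25.0),
    Unit(4, available=True, run_hours=1000, return_air_c=24.0),
]
master = elect_master(units)      # unit 2, because unit 1 is offline
rotate_duty(units, duty_count=2)  # units 3 and 4 cool; unit 2 stands by
avg = average_return_air(units)   # (25.0 + 24.0) / 2 = 24.5
print(master.address, [u.address for u in units if u.active], avg)
```

Rerunning `elect_master` and `rotate_duty` whenever a unit's availability or run hours change gives the failover and rotation behavior described above: an offline unit is skipped, and a standby unit takes its place.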

Units connected on the shielded twisted-pair bus can work collectively to condition the IT environment and respond to isolated cooling needs without expending excessive energy across the entire space. Units with multiple fans, like the MEWALL, offer additional airflow-shaping capability to target hot spots precisely.

MEWALL: Redefining Group Control

More advanced systems, such as Mitsubishi Electric’s MEWALL, include additional capabilities to further optimize efficiency and reliability:

Enhanced Airflow Standby Mode

Even when not actively cooling, standby units run fans at low speed to maintain airflow and reduce response time when activation is needed.

Active Load Distribution (ALD)

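Instead of shutting down unneeded units, ALD distributes the cooling load by running all units at lower speeds. This saves energy because fan power rises nonlinearly with fan speed: under the fan affinity laws, power scales roughly with the cube of speed, so more fans at reduced speed can move the same air with less total power than fewer fans at full speed.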

This reduces overall energy use, noise, and wear on equipment. If a unit fails, others automatically increase speed to maintain cooling capacity.
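The savings follow from the fan affinity laws: for a given fan, airflow scales roughly linearly with speed and power roughly with the cube of speed. The sketch below compares the two strategies under those idealized laws. The 5 kW rated fan power and the four-unit group are hypothetical, and real savings depend on motor efficiency, minimum fan speeds, and the system curve.

```python
def fan_power_kw(speed_fraction: float, rated_power_kw: float) -> float:
    """Ideal fan power at a fraction of full speed (power proportional to speed cubed)."""
    return rated_power_kw * speed_fraction ** 3


def compare_strategies(units_needed: int, units_installed: int, rated_power_kw: float):
    """Return (on_off_kw, shared_kw) for the same total airflow.

    on_off_kw: `units_needed` fans at full speed, the rest switched off.
    shared_kw: every installed fan running at units_needed / units_installed
    of full speed; airflow is proportional to speed, so total airflow is unchanged.
    """
    on_off_kw = units_needed * fan_power_kw(1.0, rated_power_kw)
    shared_speed = units_needed / units_installed
    shared_kw = units_installed * fan_power_kw(shared_speed, rated_power_kw)
    return on_off_kw, shared_kw


# Hypothetical group: airflow of 3 units is needed, 4 are installed, 5 kW each.
on_off, shared = compare_strategies(units_needed=3, units_installed=4, rated_power_kw=5.0)
print(f"on/off: {on_off:.2f} kW, shared: {shared:.2f} kW")
```

With these numbers, sharing the load across all four units needs 8.4375 kW versus 15 kW for three units at full speed, about 56% of the power. If one unit fails, `compare_strategies(3, 3, 5.0)` shows the remaining three units returning to full speed, which matches the failover behavior described above.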

Localized Hot & Cold Spot Adjustment

Units detecting temperature deviations beyond a threshold can temporarily fine-tune their operation to correct localized imbalances without disrupting the group’s overall coordination. Once conditions stabilize, the unit seamlessly reintegrates into the group.

Average temperature control can mask local hot spots: if part of the data center is running hotter, its effect is diluted in the average. The system may then never detect the issue, which risks inefficiency or, in the worst case, equipment failure and downtime.

With H&L local temperature protection in MEWALL group control, this risk is eliminated.

In the example shown above, Units #4 and #9 both register internal temperatures above the threshold. They are automatically disconnected from the group and excluded from the average, ensuring their hot spots aren’t overlooked. Each unit resolves its local condition independently, then reconnects once stable—maintaining efficiency and protecting uptime.
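The exclusion step can be sketched as follows. The high and low limits, the unit numbering, and the readings are illustrative assumptions (the source does not state MEWALL's thresholds); the point is to show how a plain average hides two hot units while the filtered average and the list of excluded units do not.

```python
def split_group(readings_c: dict[int, float], low_c: float, high_c: float):
    """Split units into the averaged group and locally controlled outliers.

    Returns (group_average_c, local_units). Units whose reading falls outside
    [low_c, high_c] are left out of the average and handle their own area.
    """
    in_band = {uid: t for uid, t in readings_c.items() if low_c <= t <= high_c}
    local_units = sorted(uid for uid in readings_c if uid not in in_band)
    group_average_c = sum(in_band.values()) / len(in_band) if in_band else None
    return group_average_c, local_units


# Illustrative readings (°C): units 4 and 9 sit in hot spots.
readings = {1: 24.0, 2: 24.5, 3: 23.5, 4: 29.0, 5: 24.0,
            6: 24.5, 7: 23.5, 8: 24.0, 9: 30.0}

naive_average = sum(readings.values()) / len(readings)
group_average, local_units = split_group(readings, low_c=18.0, high_c=27.0)
print(f"naive average: {naive_average:.1f} °C")  # below the 27 °C limit
print(f"group average: {group_average:.1f} °C, local: {local_units}")
```

The plain average of about 25.2 °C stays under the 27 °C limit even though units 4 and 9 read 29 °C and 30 °C. Separating them out flags both units and keeps the group average at 24.0 °C.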

Active Pressure Load (APL)

Fan speeds adjust across units to maintain consistent static pressure in plenum or ductwork, balancing airflow, reducing energy waste, and extending component life.

The APL function ensures that all units in a group adjust their fans consistently, based on pressure readings collected across the group. Each unit has a pressure sensor (transducer) and shares its readings with the designated client unit, which acts as the group's master.

In the example below, Unit #5 is the master unit (client), while Unit #1 reads 20.2 Pa and Unit #6 reads 20.4 Pa.

The client collects these values and calculates a target pressure from them: the average, minimum, or maximum, depending on the setting. In this case, the average setting is used, giving a target of 20 Pa.
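The client's aggregation step can be sketched as a small function. The `TargetMode` names and the readings are hypothetical; the source only says the target can be the average, minimum, or maximum of the group's readings.

```python
from enum import Enum
from statistics import mean


class TargetMode(Enum):
    AVERAGE = "average"
    MINIMUM = "minimum"
    MAXIMUM = "maximum"


def group_pressure(readings_pa: dict[int, float], mode: TargetMode) -> float:
    """Combine per-unit static pressure readings (Pa) into one group value."""
    values = list(readings_pa.values())
    if not values:
        raise ValueError("no pressure readings received")
    if mode is TargetMode.AVERAGE:
        return mean(values)
    if mode is TargetMode.MINIMUM:
        return min(values)
    return max(values)


# Hypothetical readings keyed by unit number.
readings_pa = {1: 20.0, 2: 19.5, 3: 20.5}
for mode in TargetMode:
    print(mode.value, group_pressure(readings_pa, mode))
```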

Once the calculation is complete, the client communicates the fan adjustment to all units in the group, so their fans run at the same speed. This keeps the system synchronized and avoids situations where some fans are overworking while others are underworking.

If one or more units reconnect to the network, the client resynchronizes the group by briefly setting all fans to minimum speed before applying the updated adjustment. You can also choose how the fans respond:

- Control mode: fans can increase or decrease speed based on pressure.

- Limit mode: fans can only increase speed if needed.
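How the common fan command might follow from the group pressure, including the resync step and the two response modes, is sketched below. The setpoint, the gain, the 30% minimum speed, and the simple integrating update (speed changes by gain times error on each cycle) are assumptions. So is the reading of limit mode as pressure only being able to raise speed above what the units would otherwise run at (`base_speed_pct`, for example from temperature control), never pull it below.

```python
from dataclasses import dataclass


@dataclass
class AplController:
    """Hypothetical APL client loop: one common fan speed for the whole group."""
    setpoint_pa: float                # desired static pressure, Pa (assumed)
    gain_pct_per_pa: float            # % speed change per Pa of error per cycle (assumed)
    limit_mode: bool = False          # False = control mode, True = limit mode
    min_speed_pct: float = 30.0       # assumed fan speed floor
    max_speed_pct: float = 100.0
    pressure_speed_pct: float = 30.0  # speed requested by the pressure loop

    def _clamp(self, speed: float) -> float:
        return min(self.max_speed_pct, max(self.min_speed_pct, speed))

    def resync(self) -> float:
        """After a unit reconnects, restart every fan from minimum speed."""
        self.pressure_speed_pct = self.min_speed_pct
        return self.pressure_speed_pct

    def update(self, measured_pa: float, base_speed_pct: float) -> float:
        """Return the common speed command sent to every unit in the group."""
        error = self.setpoint_pa - measured_pa  # positive: pressure below setpoint
        self.pressure_speed_pct = self._clamp(
            self.pressure_speed_pct + self.gain_pct_per_pa * error
        )
        if self.limit_mode:
            # Limit mode: pressure can only raise the speed above the base value.
            return max(self._clamp(base_speed_pct), self.pressure_speed_pct)
        # Control mode: the pressure loop moves the speed up or down directly.
        return self.pressure_speed_pct


ctl = AplController(setpoint_pa=20.0, gain_pct_per_pa=5.0)
ctl.resync()                                  # a unit reconnected: start from minimum
print(ctl.update(18.0, base_speed_pct=50.0))  # pressure low: speed rises
print(ctl.update(22.0, base_speed_pct=50.0))  # pressure high: speed falls

lim = AplController(setpoint_pa=20.0, gain_pct_per_pa=5.0, limit_mode=True)
print(lim.update(18.0, base_speed_pct=50.0))  # pressure loop (40) below base: base wins
```

Broadcasting one speed to every unit, rather than letting each unit run its own loop, is what keeps the fans synchronized as described above.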

Summary

Peer-to-peer group control has become a core capability in modern data center cooling—offering a smarter, more scalable way to manage multiple units as one cohesive system.

Built directly into the equipment, it streamlines setup, eliminates the need for custom programming, and removes single points of failure.

The result is faster deployment, simplified operation, and more reliable performance across the board.

Key Benefits

- Energy Efficiency: Intelligent coordination optimizes fan speeds and reduces overcooling—cutting power consumption and operating costs.

- Enhanced Reliability: Automatic failover and built-in redundancy keep systems running, even during faults or maintenance.

- Simplified Operation: A unified control interface offers centralized visibility, making it easier to monitor, adjust, and respond.

- Scalability: New units integrate seamlessly, supporting future growth without rework or downtime.

As data centers evolve, peer-to-peer group control is increasingly recognized as a key enabler of efficient, resilient, and simplified cooling systems.

Advanced solutions like MEWALL offer enhanced capabilities that improve performance, flexibility, and responsiveness—providing a strong foundation to meet today’s critical infrastructure demands.

Edited by Matt Slippy, Marketing Specialist & Nicole Kristof, Senior Marketing Specialist
